The new FT polyfill service uses the Fastly CDN to provide faster and more reliable access to polyfills for everyone. But with responses varying by user-agent, it’s a caching nightmare. We set out to fix this with a little help from Fastly’s engineers and custom Varnish VCL.

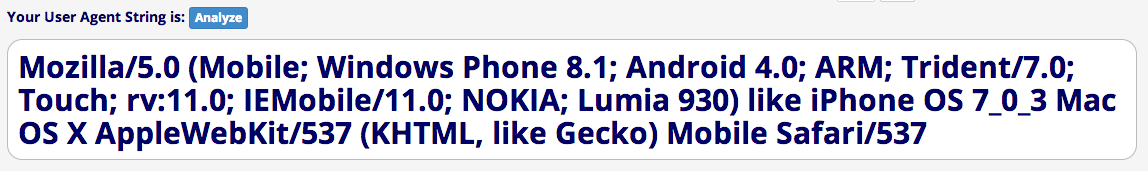

There is so much variety in the User-Agent header that many values will only be seen once. The website UserAgentString identifies over 11,000 unique user agent strings, and in fact that’s a huge underestimate (a friend at Akamai told me that they see millions of unique values every day). Worse, they’re impossible to analyse trivially.

The problem is exacerbated because each request can list any combination of polyfills that it wants, which means that if your site wants a unique combination of polyfills, you will not benefit from anything we’ve already cached for other users. And since we often release fixes for polyfills, we can’t even cache the responses for a very long time.

That’s not good enough.

Varnish to the rescue

Fastly are kindly sponsoring the traffic to the FT polyfill service, and I spent some time in the Fastly office in San Francisco last week trying to solve the problem – to improve reliability and performance for all users of the service. One of the great features of Fastly (currently poorly documented, though they assure me they’re working on it!) is the ability to write your own routing logic to run on their edge servers. Fastly uses Varnish, a fantastic open source high performance cache, and it has a straightforward configuration language called VCL (Varnish Configuration Language) which we already use extensively at the FT.

As it turns out, we can use some custom VCL to improve cache performance by separating the user agent from the list of polyfills to be included.
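To make the effect concrete, here is a minimal Python sketch (a model, not the service's code) that counts cache entries for the same set of requests with and without normalisation. `toy_normalise` is a hypothetical stand-in that only understands Chrome; the real service uses the useragent npm module.

```python
import re


def toy_normalise(ua: str) -> str:
    """Hypothetical normaliser: keep only family and major.minor.build."""
    m = re.search(r"Chrome/(\d+)\.(\d+)\.(\d+)", ua)
    return f"chrome/{m.group(1)}.{m.group(2)}.{m.group(3)}" if m else "other"


def count_cache_keys(requests, normalise=None) -> int:
    """Count distinct (url, ua) cache keys; normalise=None keys on the raw UA."""
    keys = set()
    for url, ua in requests:
        keys.add((url, normalise(ua) if normalise else ua))
    return len(keys)


# Three raw UAs that differ only in details irrelevant to polyfilling,
# each asking for two different bundles.
uas = [
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_2) AppleWebKit/537.37 "
    "(KHTML, like Gecko) Chrome/37.0.2062.120 Safari/537.40",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_2) AppleWebKit/537.37 "
    "(KHTML, like Gecko) Chrome/37.0.2062.120 Safari/537.41",
    "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/37.0.2062.103 Safari/537.36",
]
urls = ["/v1/polyfill.js?features=Array.from", "/v1/polyfill.js?features=Promise"]
requests = [(url, ua) for ua in uas for url in urls]

print(count_cache_keys(requests))                 # 6
print(count_cache_keys(requests, toy_normalise))  # 2
```

Keying on the raw header gives one cache entry per UA string per bundle; keying on the normalised UA lets every Chrome 37.0.2062 user share the same bundle entries.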

Step 1: normalisation API

First, I added an endpoint to the polyfill service API, to convert raw user agent strings into normalised ones, using the useragent npm module (this is based on browserscope, written by Steve Souders, who coincidentally is now at Fastly). It works like this:

```
GET /v1/normalizeUa?ua=Mozilla/5.0%20(Macintosh;%20Intel%20Mac%20OS%20X%2010_9_2)%20AppleWebKit/537.37%20(KHTML,%20like%20Gecko)%20Chrome/37.0.2062.120%20Safari/537.40 HTTP/1.1
Host: cdn.polyfill.io
Accept: */*

HTTP/1.1 200 OK
Cache-Control: public, max-age=31536000, stale-if-error=32140800
Normalized-User-Agent: chrome%2F37.0.2062
```

You submit a raw User-Agent, and get back a normalised one (there are a few other headers in the response that aren’t relevant here, so I left them out). The important parts of the response are:

- The `Cache-Control` header makes the response fresh for a year, and if the normalisation API goes down, stale responses can continue to be used for up to another year. This means that future requests with the same user agent within a year will hit the cached response instantly.
- The `Normalized-User-Agent` header specifies the normalised identifier string derived from the submitted UA. It is returned as a header rather than as body content, and is URL-encoded; both choices make it easier to work with in Varnish.
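The contract of the normalisation endpoint can be sketched in Python as below. This is an illustrative model only: `normalize_ua_response` and its Chrome-only regex are hypothetical, whereas the real endpoint is part of the Node app and uses the useragent module. It does show the two properties that matter to Varnish, plus the arithmetic behind the `Cache-Control` values (365 days fresh, then up to 372 days of `stale-if-error`).

```python
import re
from urllib.parse import quote

SECONDS_PER_DAY = 86_400


def normalize_ua_response(raw_ua: str) -> tuple[int, dict[str, str]]:
    """Toy model of /v1/normalizeUa: the answer travels in a URL-encoded
    response header, and the response is cacheable for a year.
    The Chrome-only regex is a hypothetical stand-in for the useragent module."""
    m = re.search(r"Chrome/(\d+\.\d+\.\d+)", raw_ua)
    normalised = f"chrome/{m.group(1)}" if m else "other/0.0.0"
    headers = {
        "Cache-Control": "public, max-age=31536000, stale-if-error=32140800",
        # safe="" makes quote() encode "/" as %2F, as in the example response.
        "Normalized-User-Agent": quote(normalised, safe=""),
    }
    return 200, headers


status, headers = normalize_ua_response(
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_2) AppleWebKit/537.37 "
    "(KHTML, like Gecko) Chrome/37.0.2062.120 Safari/537.40"
)
print(status, headers["Normalized-User-Agent"])                  # 200 chrome%2F37.0.2062
print(31536000 // SECONDS_PER_DAY, 32140800 // SECONDS_PER_DAY)  # 365 372
```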

It’s important that this normalisation is done by the backend app, not in Varnish itself. Although it would be faster to run in Varnish, it would be virtually impossible to keep the normalisation rules in sync between the backend application and Varnish, and the consequences of Varnish normalising user-agents differently to the backend are pretty hideous.

Step 2: Write some custom VCL

Now we need to override Fastly’s default behaviour, to enable normalising of requests before passing them to the backend. I created an empty text file and wrote this:

```
sub vcl_recv {
  # Template tag for rules created in the Fastly UI
  #FASTLY recv

  # Standard Fastly boilerplate (must be included to avoid wiping it out)
  if (req.request != "HEAD" && req.request != "GET" && req.request != "FASTLYPURGE") {
    return(pass);
  }

  # If request is to the polyfill endpoint and does not specify a UA on the query string
  if (req.url ~ "^/v1/polyfill." && req.url !~ "[?&]ua=") {
    # Remember the original request in a header (no variables in Varnish) and rewrite the request as a UA normalisation lookup
    set req.http.X-Orig-URL = req.url;
    set req.url = "/v1/normalizeUa?ua=" urlencode(req.http.User-Agent);
  }

  # Pass the request on to the next stage in the Varnish workflow - the cache lookup
  return(lookup);
}
```

`sub vcl_recv` defines one of the standard Varnish workflow steps. `recv` is the first stage, which runs when Varnish receives a request.

There are a few specifics peculiar to Fastly. FASTLY recv is a template tag that Fastly replaces with any config that you’ve set up using their admin UI. I thought this would also be where standard Fastly VCL goes, but actually it is only used to add stuff you configure yourself in the admin tool, so if you haven’t done anything in the UI, it’ll get replaced with an empty string.

The checks for HEAD, GET, and FASTLYPURGE are the standard Fastly VCL for the vcl_recv function. A bit counterintuitively, you have to include this code in your own VCL in order to avoid wiping it out when you upload your own config.

The second if statement is the logic I have added. It says if the request URL is the polyfill bundle endpoint, and the request does not include a ua parameter, copy the URL into a header on the request (so we can remember it, because Varnish doesn’t have variables). Then replace the URL with the normalise endpoint. We also have to URL encode the User-Agent, for which Fastly have a built-in function (which isn’t part of regular VCL).

Finally, we return(lookup) which instructs Varnish to try and find the specified resource in the cache. If the request was a bundle request, it’s now become a normalisation one so that’s what gets looked up.
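For readers more at home in a general-purpose language, the `vcl_recv` logic above can be modelled in Python. This is a sketch under assumptions: requests are plain dicts, `recv` is a hypothetical name, and Fastly's `urlencode()` is assumed to escape the same characters as Python's `quote(..., safe="")`.

```python
import re
from urllib.parse import quote


def recv(req: dict) -> str:
    """Model of the custom vcl_recv: mutates `req` in place and returns the
    next action, 'pass' or 'lookup'. `req` has 'method', 'url', 'headers'."""
    # Standard Fastly boilerplate: anything but HEAD/GET/FASTLYPURGE bypasses cache.
    if req["method"] not in ("HEAD", "GET", "FASTLYPURGE"):
        return "pass"
    url = req["url"]
    # Bundle request without a ua= parameter: turn it into a normalise lookup.
    if re.match(r"/v1/polyfill.", url) and not re.search(r"[?&]ua=", url):
        req["headers"]["X-Orig-URL"] = url  # remember where we were going
        # Assumption: Fastly's urlencode() escapes like quote(..., safe="").
        # A missing User-Agent is modelled as an empty string.
        raw_ua = req["headers"].get("User-Agent", "")
        req["url"] = "/v1/normalizeUa?ua=" + quote(raw_ua, safe="")
    return "lookup"


req = {"method": "GET", "url": "/v1/polyfill.js?features=Array.from",
       "headers": {"User-Agent": "Chrome/37.0.2062.120"}}
print(recv(req))   # lookup
print(req["url"])  # /v1/normalizeUa?ua=Chrome%2F37.0.2062.120
```

A request that already carries `ua=` (such as the restarted one) falls straight through to the lookup unchanged.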

The lookup may succeed or not, and if it doesn’t Varnish will emit a backend request to the Node app to fetch the resource. Either way, the next step on the Varnish workflow that we’re interested in is vcl_deliver. This happens when Varnish has got a response, either from cache or freshly from the backend, and is ready to deliver it to the end user.

```
sub vcl_deliver {
  # Include any VCL generated from the Fastly UI
  #FASTLY deliver

  # Normalise lookup succeeded: rebuild the original URL with the normalised UA and restart
  if (req.url ~ "^/v1/normalizeUa" && req.http.X-Orig-URL && resp.status == 200) {
    set req.http.Fastly-force-Shield = "1";
    # "\?" matches a literal question mark, i.e. the original URL already has a query string
    if (req.http.X-Orig-URL ~ "\?") {
      set req.url = req.http.X-Orig-URL "&ua=" resp.http.Normalized-User-Agent;
    } else {
      set req.url = req.http.X-Orig-URL "?ua=" resp.http.Normalized-User-Agent;
    }
    restart;

  # Bundle originally requested without ua=: downstream caches must vary on User-Agent
  } else if (req.url ~ "^/v1/polyfill..*[?&]ua=" && req.http.X-Orig-URL && req.http.X-Orig-URL !~ "[?&]ua=") {
    set resp.http.Vary = "Accept-Encoding, User-Agent";
  }

  return(deliver);
}
```

I added this to the same file as the `vcl_recv` function. Again we are defining the function and allowing Fastly to insert any VCL generated from settings we create in the admin UI (with `#FASTLY deliver`). Then:

- If the URL being delivered is the normalise endpoint, the response is `200 OK`, and we have a record of an original URL (i.e. the request from the end user wasn’t for the normalise endpoint), then we need to restart the request with the original URL:
  - Setting `Fastly-force-Shield` ensures the restarted request can take advantage of Fastly’s clustering behaviour (a bit weird)
  - The original URL is reinstated, with an additional query param added to carry the normalised user agent
  - The request is restarted, which returns us to `vcl_recv`
- If the URL being delivered is a polyfill bundle which was originally requested without a `ua` param, add a `Vary` header to the response, since downstream caches which don’t have our special logic will need to store a separate entry for each user-agent
- Finally, we tell Varnish that it’s OK to deliver the response (we’ll only get to this point if we haven’t restarted)
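The same decisions can be sketched in Python, again as a model rather than anything that runs on Fastly. `deliver` is a hypothetical name, requests and responses are plain dicts, and the `"?" in orig` test plays the role of the VCL's check for an existing query string.

```python
import re


def deliver(req: dict, resp: dict) -> str:
    """Model of the custom vcl_deliver: returns 'restart' or 'deliver' and
    mutates req/resp in place the way the VCL does."""
    orig = req["headers"].get("X-Orig-URL")
    if req["url"].startswith("/v1/normalizeUa") and orig and resp["status"] == 200:
        req["headers"]["Fastly-force-Shield"] = "1"
        ua = resp["headers"]["Normalized-User-Agent"]  # already URL-encoded
        sep = "&" if "?" in orig else "?"              # extend or start a query string
        req["url"] = f"{orig}{sep}ua={ua}"
        return "restart"
    if (re.search(r"^/v1/polyfill..*[?&]ua=", req["url"]) and orig
            and not re.search(r"[?&]ua=", orig)):
        # The end user never sent ua=, so downstream caches must key on User-Agent.
        resp["headers"]["Vary"] = "Accept-Encoding, User-Agent"
    return "deliver"


req = {"url": "/v1/normalizeUa?ua=Chrome%2F37.0.2062.120",
       "headers": {"X-Orig-URL": "/v1/polyfill.js?features=Array.from"}}
norm_resp = {"status": 200, "headers": {"Normalized-User-Agent": "chrome%2F37.0.2062"}}
print(deliver(req, norm_resp))  # restart
print(req["url"])               # /v1/polyfill.js?features=Array.from&ua=chrome%2F37.0.2062

bundle_resp = {"status": 200, "headers": {}}
print(deliver(req, bundle_resp))       # deliver
print(bundle_resp["headers"]["Vary"])  # Accept-Encoding, User-Agent
```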

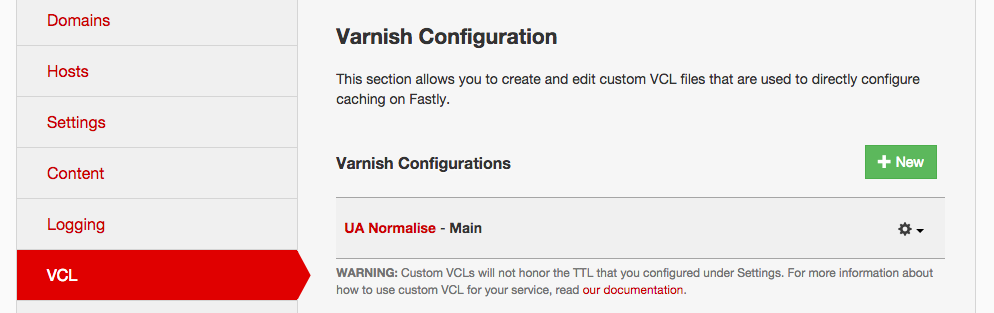

Step 3: Upload it

Now I can upload the finished VCL to Fastly’s admin UI:

- Log in at https://app.fastly.com

- Click configure, and choose the correct app if you have more than one (I have both a live and a test version of the polyfill service, which is a common pattern)

- Click VCL, and then New

- Type a name like ‘UA Normalise’, and select the VCL file from disk

- Click create, then activate the new VCL

Step 4: Try it out

Now, let’s make a request and see what happens:

```
GET /v1/polyfill.js?features=Array.from HTTP/1.1
User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_2) AppleWebKit/537.37 (KHTML, like Gecko) Chrome/37.0.2062.120 Safari/537.41

HTTP/1.1 200 OK
Cache-Control: public, max-age=86400, stale-while-revalidate=604800, stale-if-error=604800
Content-Type: application/javascript; charset=utf-8
Access-Control-Allow-Origin: *
Content-Length: 201
Age: 0
X-Served-By: cache-lcy1120-LCY
X-Cache: MISS
X-Cache-Hits: 0
Vary: Accept-Encoding, User-Agent

/* Polyfill bundle includes the following polyfills. For detailed credits
 * and licence information see http://github.com/financial-times/polyfill-service.
 *
 * Detected: chrome/37.0.2062
 *
 */
```

This response tells us that:

- We got served by `cache-lcy1120-LCY`, a Fastly node somewhere in the City of London
- The resource was not in cache, so it was fetched from the backend
- It’s fresh for 24 hours, and after that can be used stale for up to a week while it is revalidated asynchronously, via the `stale-while-revalidate` directive. This is really cool, because it means that once one end user’s request has had to wait on the backend, later requests will probably never have to wait again, and yet any changes to the polyfills will still get into the cache
- It’s also fine to serve a stale response for up to a week if the backend is down, via the `stale-if-error` directive
- The `Vary` header includes `User-Agent` because our request did not specify a `ua` parameter.
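To see what these directives allow, here is a simplified Python reading of `max-age` plus the `stale-while-revalidate` and `stale-if-error` extensions from RFC 5861, applied to the `Cache-Control` header from the response above. It is an approximation for illustration: the function names are hypothetical, and this is not how Fastly implements the directives internally.

```python
def parse_cache_control(value: str) -> dict[str, int]:
    """Collect the numeric Cache-Control directives, e.g. max-age=86400."""
    directives: dict[str, int] = {}
    for part in value.split(","):
        name, _, arg = part.strip().partition("=")
        if arg.isdigit():  # skips valueless directives such as "public"
            directives[name.lower()] = int(arg)
    return directives


def cache_state(age: int, cc: dict[str, int], backend_up: bool = True) -> str:
    """Simplified reading of max-age plus the RFC 5861 stale-* extensions."""
    max_age = cc.get("max-age", 0)
    if age < max_age:
        return "fresh"
    stale_for = age - max_age  # seconds past expiry
    if backend_up and stale_for < cc.get("stale-while-revalidate", 0):
        return "serve stale, revalidate in background"
    if not backend_up and stale_for < cc.get("stale-if-error", 0):
        return "serve stale, backend down"
    return "must go to backend"


DAY = 86_400
cc = parse_cache_control(
    "public, max-age=86400, stale-while-revalidate=604800, stale-if-error=604800")
print(cache_state(3600, cc))                       # fresh
print(cache_state(DAY + 3600, cc))                 # serve stale, revalidate in background
print(cache_state(3 * DAY, cc, backend_up=False))  # serve stale, backend down
print(cache_state(9 * DAY, cc))                    # must go to backend
```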

This is what showed up in the web server logs:

```
2014-09-25T00:43:15.778324+00:00 heroku[router]: at=info method=GET path="/v1/normalizeUa?ua=Mozilla/5.0%20(Macintosh;%20Intel%20Mac%20OS%20X%2010_9_2)%20AppleWebKit/537.37%20(KHTML,%20like%20Gecko)%20Chrome/37.0.2062.120%20Safari/537.41" status=200 bytes=240
2014-09-25T00:43:15.865318+00:00 heroku[router]: at=info method=GET path="/v1/polyfill.js?features=Array.from&ua=chrome%2F37.0.2062" status=200 bytes=521
```

Notice how neither of the requests that hit the backend matches the URL we actually requested. The first is the normalise request, which receives the full raw UA string, and returns a normalised one (which we can’t see in the log). Then a new request is made that adds the normalised UA to the original request URL.

If we send the request again, modifying the user agent, the backend gets one request – the normalise call for the new user agent string. If instead we change the list of features we want polyfills for, the backend also gets one request: for the new bundle.
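The backend-hit pattern described above can be reproduced with a small Python simulation. It is a deliberately simplified model: it collapses `vcl_recv`, `vcl_deliver` and the restart into two cache lookups, ignores TTLs and `Vary`, and uses a hypothetical Chrome-only `toy_normalise` in place of the real normaliser.

```python
import re
from collections.abc import Callable
from urllib.parse import quote


def toy_normalise(raw_ua: str) -> str:
    """Hypothetical stand-in for the useragent-based normaliser."""
    m = re.search(r"Chrome/(\d+\.\d+\.\d+)", raw_ua)
    return f"chrome/{m.group(1)}" if m else "other/0.0.0"


class EdgeSim:
    """Toy edge: a URL-keyed cache in front of a backend that records every
    URL it is asked for (like the Heroku router log)."""

    def __init__(self) -> None:
        self.cache: dict[str, str] = {}
        self.backend_log: list[str] = []

    def _lookup(self, url: str, backend: Callable[[], str]) -> str:
        if url not in self.cache:  # cache miss: go to the backend once
            self.backend_log.append(url)
            self.cache[url] = backend()
        return self.cache[url]

    def get_bundle(self, url: str, raw_ua: str) -> str:
        # Stage 1: the normalise call, cached per raw UA string.
        norm_url = "/v1/normalizeUa?ua=" + quote(raw_ua, safe="")
        ua = self._lookup(norm_url, lambda: quote(toy_normalise(raw_ua), safe=""))
        # Stage 2: the "restarted" bundle request, cached per features + normalised UA.
        bundle_url = url + ("&" if "?" in url else "?") + "ua=" + ua
        return self._lookup(bundle_url, lambda: f"/* bundle for {bundle_url} */")


ua_a = ("Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_2) AppleWebKit/537.37 "
        "(KHTML, like Gecko) Chrome/37.0.2062.120 Safari/537.41")
ua_b = ua_a.replace("Safari/537.41", "Safari/537.40")

sim = EdgeSim()
sim.get_bundle("/v1/polyfill.js?features=Array.from", ua_a)
print(len(sim.backend_log))  # 2: normalise call + bundle
sim.get_bundle("/v1/polyfill.js?features=Array.from", ua_a)
print(len(sim.backend_log))  # 2: both cached
sim.get_bundle("/v1/polyfill.js?features=Array.from", ua_b)
print(len(sim.backend_log))  # 3: new UA string, only the normalise call misses
sim.get_bundle("/v1/polyfill.js?features=Promise", ua_a)
print(len(sim.backend_log))  # 4: new feature list, only the bundle misses
print(sim.backend_log[1])    # /v1/polyfill.js?features=Array.from&ua=chrome%2F37.0.2062
```

In this model the second backend URL has the same shape as the second line of the Heroku log above.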

Conclusions

Using VCL, you can write some pretty complex logic to run on the edge if you’re using Fastly. But there are some shortcomings in the documentation, so you may need to ask for help. Here are some things I learned along the way:

- Not all responses are cached by default. Fastly only caches 200, 203, 300, 301, 302, 410 and 404s. You can change this behaviour in custom VCL, but it’s worth knowing what the defaults are. I was trying to serve `204 No Content` responses from the normalise endpoint, and wondering why that didn’t work.
- At time of writing the docs say you have to override all the VCL functions if you are going to override any of them. This seems to be a mistake in the docs.
- If you override `vcl_fetch`, the behaviour on Fastly will not be the same as if you run your own Varnish, because Fastly runs `fetch` on a different server to the one it runs `recv` and `deliver` on. This allows them to scale their infrastructure but means some stuff you set in `vcl_fetch` gets wiped out. I found it easier to stick to the ‘client’ side of the divide, but this would benefit from being documented.
- If you send a `HEAD` request instead of a `GET` (easy to do by accident in cURL by adding a `-I` flag), this disables Fastly’s in-POP clustering, which means that you’ll find it hard to get a cache hit, because every time you send a request you’ll likely hit a different node. With clustering on, the resource can be anywhere in the POP and you’ll score a hit. There are some other differences between GET and other HTTP verbs explained in the docs.
- You can only restart 3 times. In this particular use case I only need to restart a maximum of once, but it’s worth knowing that the fourth restart will fail and the content will be delivered anyway. This is now mentioned on the custom VCL guide.

- Almost all functions that do normalisation in most programming languages spell it with a z, so in resigned acceptance of our American cousins’ de facto victory over the use of English in syntax, the API for the service spells it with a z. But of course in this post I’ve spelled it with an s. So there.
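If you want these limits in a test suite or a checklist, the two numeric facts above fit in a few lines of Python. The constant and function names are hypothetical; the values are the defaults described in this post at the time of writing and may have changed since.

```python
# Defaults as described in this post; check current Fastly docs before relying on them.
DEFAULT_CACHEABLE_STATUSES = frozenset({200, 203, 300, 301, 302, 404, 410})
MAX_RESTARTS = 3


def cached_by_default(status: int) -> bool:
    """Would Fastly cache this status without any custom VCL?"""
    return status in DEFAULT_CACHEABLE_STATUSES


def restart_allowed(restarts_so_far: int) -> bool:
    """A restart is allowed while fewer than MAX_RESTARTS have happened;
    the fourth attempt fails and the current response is delivered."""
    return restarts_so_far < MAX_RESTARTS


# The normalise endpoint must answer 200 (a 204 would not be cached), and the
# flow restarts at most once, well within the limit.
print(cached_by_default(200), cached_by_default(204))  # True False
print(restart_allowed(0), restart_allowed(3))          # True False
```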

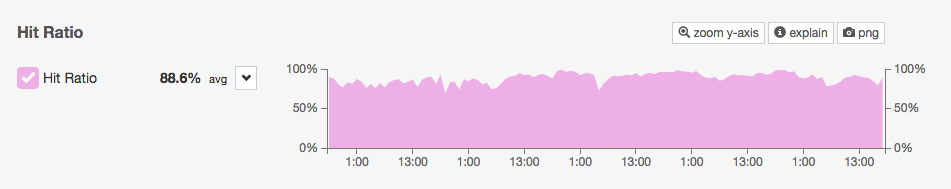

As for the polyfill service, we’re now enjoying a near perfect cache-hit ratio!